I’ve always been a bit of a futurist. Seeing firsthand how AI is rapidly changing the way businesses interact with their customers and employees is an incredible experience. Artificial intelligence has given us superpowers – the ability to process vast amounts of data, recognize patterns, and automate complex tasks with remarkable efficiency. But it’s also admittedly a bit scary. What if AI takes my job? Are we using it in ethical and transparent ways? Can I trust AI model outputs?

As businesses continue integrating more AI systems and tools, the need for Explainable and Trustworthy AI is growing. Understanding what this means and how to achieve it is a vital step toward ensuring your models are transparent, accountable, and aligned with human judgment. The end goal is to enable your business to use AI’s full strengths while enhancing, rather than undermining, the experience of your employees and customers.

This blog explores three key considerations for Explainable AI in your business:

- Aligning Transparency with Business Needs

- Human-in-the-Loop with AI Assistants & Agents

- Establishing AI Principles for Long-Term Success

AI’s incredible potential can only be fully realized when its outputs are understandable and justifiable. Black-box AI models create significant risks, particularly in high-stakes industries that we work with regularly, such as finance, healthcare, and legal services. Transparency allows organizations to trust AI-driven insights and breaks down barriers to user adoption. This is where Explainable AI plays a starring role.

What is Explainable AI?

Explainable AI is an approach to AI development that aims to foster trust among users and align AI-driven outcomes with corporate values and compliance standards by ensuring AI models are transparent and accountable.

1. Aligning Transparency with Business Needs

A recent Zendesk CX Trends Report (2024) [1] presented an eye-opening statistic: three in four customer experience experts believe a lack of transparency is having a direct negative impact on customer churn. This isn’t a new concept, but it takes on a new flavor in the realm of AI.

I recall, earlier in my career, sitting through a demo for a machine learning-based mortgage underwriting solution. The application analyzed incredible amounts of data and used sophisticated models to quickly categorize an application as “pass” or “fail”. It would then create a report full of complex statistical outputs that required an advanced knowledge of probability and statistics to understand. What it lacked was the transparency and explainability that would have given a human underwriter the key points to weigh when making the final decision on a loan. It felt too much like a black box, and user feedback was that their part in the decision was being taken away.

It’s not that these advanced models aren’t appropriate for this type of use case – but they must come with a sufficient level of transparency and understanding for the people using them. Your level of required transparency will vary depending on your business and the use cases you wish to use AI for. A business case for an AI-driven chatbot that recommends local restaurants might have less stringent transparency requirements than an AI model that assists an HR department with hiring decisions. Gaining a holistic understanding of each use case allows us to apply principles of good governance, ensuring we can explain what data is included, what data is excluded, how that data is collected and stored, and how we’re preventing inherent biases.

Quisitive’s approach to AI Innovation through our comprehensive Design Lab framework ensures that we are proactively engaging with critical stakeholders at every level from the outset. Understanding their pain points and having open dialogue about the intended results of AI tools and systems helps allay fears that AI might “take their jobs” and instead focuses on how AI can further aid people in their work.

2. Human-in-the-Loop with AI Assistants and AI Agents

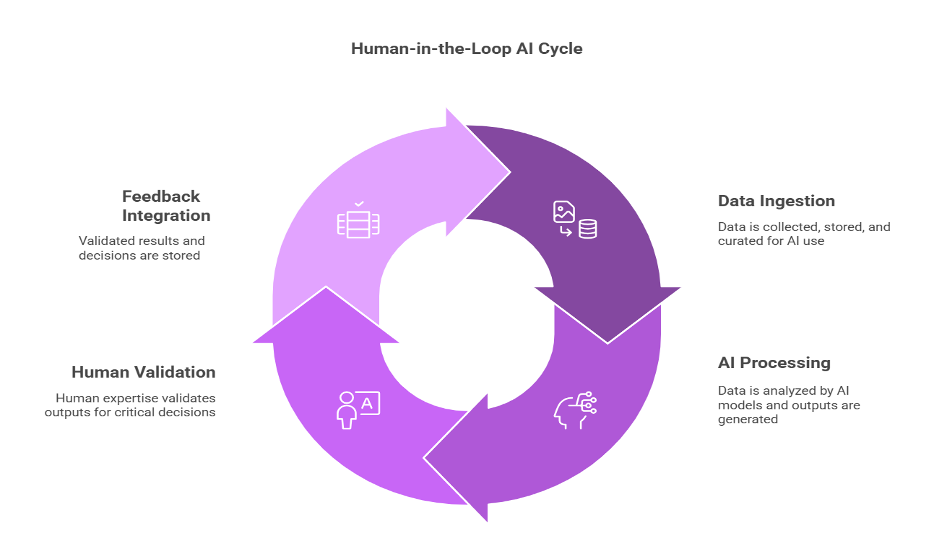

Expanding on this idea of using AI to enhance human expertise, we come to the “Human-in-the-Loop” (HITL) design approach. The general concept is to automate elements of a process using AI while building in key checkpoints where human review is conducted before proceeding further. In industries where precision and judgment are critical, AI should act as a powerful assistant rather than an autonomous decision-maker. We see this in the growing adoption of AI-driven assistants and Agentic AI.

Consider a healthcare organization evaluating an AI-powered diagnostic assistant that helps radiologists detect anomalies in medical imaging. These systems already have impressive accuracy rates, but questions should still be asked: At what rate does the model erroneously flag images as high-risk (false positives) or low-risk (false negatives)? What underlying factors contribute to the recommendations? What’s the best way for us to use these AI-powered recommendations as part of a broader human-in-the-loop process?
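To make the first of those questions concrete, here is a minimal sketch of how the two error rates could be computed from a set of reviewed cases. The function name, the label strings, and the sample data are all hypothetical and only for illustration; a real evaluation would use a properly sized, clinically validated test set.

```python
def error_rates(actual, predicted, positive="high-risk"):
    """Return false positive and false negative rates for a binary flag.

    actual    -- labels confirmed by human experts (assumed ground truth)
    predicted -- labels produced by the model for the same cases
    """
    tp = fp = tn = fn = 0
    for truth, guess in zip(actual, predicted):
        if guess == positive and truth == positive:
            tp += 1  # correctly flagged as high-risk
        elif guess == positive:
            fp += 1  # flagged as high-risk, but actually low-risk
        elif truth == positive:
            fn += 1  # flagged as low-risk, but actually high-risk
        else:
            tn += 1  # correctly flagged as low-risk

    # Guard against division by zero when a class is absent from the sample.
    fpr = fp / (fp + tn) if (fp + tn) else 0.0
    fnr = fn / (fn + tp) if (fn + tp) else 0.0
    return {"false_positive_rate": fpr, "false_negative_rate": fnr}


# Hypothetical sample: five images reviewed by radiologists.
actual = ["high-risk", "low-risk", "low-risk", "high-risk", "low-risk"]
predicted = ["high-risk", "high-risk", "low-risk", "low-risk", "low-risk"]
rates = error_rates(actual, predicted)
print(rates)
```

In a diagnostic setting the two rates rarely carry equal weight: a missed anomaly (false negative) is usually far more costly than an unnecessary second look (false positive), which is one reason to report them separately rather than as a single accuracy figure.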

AI is great at identifying patterns, but we still need qualified and informed humans to make final decisions. Human-in-the-Loop models ensure AI-generated insights are reviewed and contextualized by experts so appropriate actions are taken.
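The checkpoint idea can be sketched in a few lines. This is an illustrative example only, assuming a model that returns a label and a confidence score; the `Prediction` class, the `route` function, the 0.95 threshold, and the label names are assumptions made for the sketch, not part of any specific product.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    case_id: str
    label: str         # e.g. "high-risk" or "low-risk" (hypothetical labels)
    confidence: float  # model's self-reported score between 0 and 1


def route(prediction, auto_threshold=0.95, always_review=("high-risk",)):
    """Decide whether a prediction can proceed or needs human review."""
    # Checkpoint 1: high-stakes outcomes always go to a qualified reviewer.
    if prediction.label in always_review:
        return "human_review"
    # Checkpoint 2: anything the model is unsure about goes to a reviewer.
    if prediction.confidence < auto_threshold:
        return "human_review"
    # Only confident, low-stakes results continue automatically.
    return "auto"


# Hypothetical batch of model outputs.
batch = [
    Prediction("img-001", "low-risk", 0.99),
    Prediction("img-002", "low-risk", 0.71),
    Prediction("img-003", "high-risk", 0.98),
]
for p in batch:
    print(p.case_id, route(p))
```

Where to set the threshold, and which outcomes always require review, are business decisions rather than technical ones, and they are worth revisiting as you gather data on how often reviewers overturn the model.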

3. Establishing AI Principles for Long-Term Success

As organizations move beyond AI experimentation and into enterprise-wide adoption, a key differentiator between short-term wins and sustained success will be the presence of well-defined AI principles. Without clear and communicated guidelines, business units risk inconsistent implementations, ethical blind spots, and a disconnect between AI capabilities and organizational goals. AI leaders should strive to set standards early. Microsoft maintains guidance for its Responsible AI Standards [2] covering core principles for designing, building, and testing AI systems.

The most effective AI strategies must start with these foundational standards and address key questions such as: How do we define Explainable AI in our specific use cases? What level of transparency is required for different applications? How do we ensure our AI systems are trustworthy and free from bias? And how do we continuously train employees to use AI responsibly?

These aren’t one-time considerations; they are an ongoing effort that will require refinement as the AI landscape continues to shift and evolve with your business needs.

Need help establishing your business’s AI principles? Start with an AI Security Assessment, where experts guide you in ensuring ethical and responsible AI governance policies.

In Closing

Remember, it’s not just about getting AI to work. Ensuring it continues to work in a fair, explainable, and compliant manner over time is vital to scaling AI solutions in a way that maintains control and consistency.

At Quisitive, our AI Services embed these AI principles from day one, ensuring AI solutions are built with transparency, accountability, and long-term viability in mind. By engaging with you and your teams, we help organizations move beyond AI experimentation and into enterprise-wide adoption. Investing in these principles today lays the foundation for AI success that is not only innovative but sustainable and trustworthy.
